What is the best code of a given length? This natural, but very hard, question motivates the following definition.

Definition: Let denote the largest

such that there exists a [

]-code in

.

Determining is one of the main problems in the theory of error-correcting codes.

Let be a sequence of linear codes with parameters [

] such that

as

, and such that both the limits

and

exist. The Singleton (upper) bound implies

.

Let denote the set of all

such that there exists a sequence

,

, of [

]-codes for which

and

.

Theorem (Manin [SS]): There exists a continuous decreasing function , such that

-

is strictly decreasing on [

],

-

,

- if

then

,

-

.

Theorem (Gilbert-Varshamov): We have . In other words, for each fixed

, there exists an [

]-code

(which may depend on

) with

. In particular, in the case

,

, where

is the entropy function (for a q-ary channel).

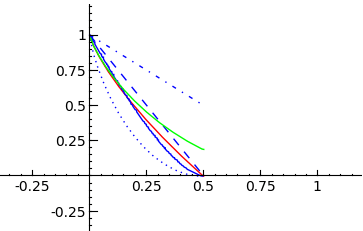

Plot of the Gilbert-Varshamov (dotted), Elias (red), Plotkin (dashed), Singleton (dash-dotted), Hamming (green), and MRRW (stepped) curves using SAGE:

Not a single value of is known for

!

Goppa’s conjecture: . (In other words, the Gilbert-Varshamov lower bound is actually attained in the case

.)

Though my guess is that Goppa’s conjecture is true, it is known (thanks to work of Zink, Vladut and Varshamov) that the q-ary analog of Goppa’s conjecture is false for . On the other hand, we have the following theorem [J].

Theorem: If is true for infinitely many primes p with

then Goppa’s conjecture is false.

The rest of this post will be devoted to explaining the notation in this Theorem. The notation means: “For all subsets

,

holds.” The notation

means: For each non-empty subset

, define the hyperelliptic curve

by

, where

.

It may seem strange that a statement about the asymptotic bounds of error-correcting curves is related to the number of points on hyperelliptic curves, but for details see [J]. Unfortunately, it appears much more needs to be known about hyperelliptic curves over finite fields if one is to try to use this connection to prove or disprove Goppa’s conjecture.

References:

[J] D. Joyner, On quadratic residue codes and hyperelliptic curves. Discrete Mathematics & Theoretical Computer Science, Vol 10, No 1 (2008).

[SS] S. Shokranian and M.A. Shokrollahi, Coding theory and bilinear complexity, Scientific Series of the International Bureau, KFA Julich Vol. 21, 1994.

Here’s how the above plot is constructed using SAGE:

sage: f1 = lambda x: gv_bound_asymp(x,2)

sage: P1 = plot(f1,0,1/2,linestyle=":")

sage: f2 = lambda x: plotkin_bound_asymp(x,2)

sage: P2 = plot(f2,0,1/2,linestyle="--")

sage: f3 = lambda x: elias_bound_asymp(x,2)

sage: P3 = plot(f3,0,1/2,rgbcolor=(1,0,0))

sage: f4 = lambda x: singleton_bound_asymp(x,2)

sage: P4 = plot(f4,0,1/2,linestyle="-.")

sage: f5 = lambda x: mrrw1_bound_asymp(x,2)

sage: P5 = plot(f5,0,1/2,linestyle="steps")

sage: f6 = lambda x: hamming_bound_asymp(x,2)

sage: P6 = plot(f6,0,1/2,rgbcolor=(0,1,0))

sage: show(P1+P2+P3+P4+P5+P6)

If you are interested in computing what c might be in the above statement B(c,p), the following SAGE code may help.

First, the Python function below will return a random list of elements without repetition, if desired) from an object.

def random_elements(F,n,allow_repetition=True):

"""

F is a SAGE object for which random_element is a method

and having >n elements. For example, a finite field. Here

n>1 is an integer. The output is to have no repetitions

if allow_repetion=False.

EXAMPLES:

sage: p = nth_prime(100)

sage: K = GF(p)

sage: random_elements(K,2,allow_repetition=False)

[336, 186]

The command random_elements(K,p+1,allow_repetition=False)

triggers a ValueError.

"""

if (n>F.order()-2 and allow_repetition==False):

raise ValueError("Parameters are not well-choosen.")

L = []

while len(L)<n:

c = F.random_element()

if allow_repetition==False:

if not(c in L):

L = L+[c]

else:

L = L+[c]

return L

Next, the SAGE code computes for a particular choice of the parameters. This gives a lower bound for c.

sage: p = nth_prime(100)

sage: K = GF(p)

sage: R. = PolynomialRing(K, "x")

sage: S = random_elements(K,ZZ((p+1)/2),allow_repetition=False)

sage: fS = prod([x - a for a in S])

sage: XS = HyperellipticCurve(fS)

sage: RR(len(XS.points())/p)

0.975970425138632

Based on experiments with this code, it appears that for “random” chices of S, and p “large”, the quotient is very close to 1.

You must be logged in to post a comment.